SpeakEasy: Breaking Language Barriers with Real-Time AI Translation

Introduction

Real-time multilingual communication is still one of the biggest friction points in global collaboration. Teams can connect instantly over video, but language differences continue to reduce clarity, speed, and confidence in live conversations.

To address this, I led the development of SpeakEasy, an AI-powered voice translation platform that enables seamless real-time communication across languages.

The objective was to create a production-grade system that could deliver low-latency speech recognition, context-aware translation, and natural voice output at global scale.

Project Overview

SpeakEasy was designed as an end-to-end communication platform for multilingual interactions across business, travel, healthcare, and education.

The platform listens to live speech, transcribes it, translates it with context awareness, and returns natural voice output in the target language in near real time.

Core Architecture

Microservices Backend

I built a scalable backend using Node.js and Express.js with service boundaries optimized for:

- Audio ingestion and preprocessing

- Speech recognition orchestration

- Translation routing and model selection

- Voice synthesis and playback delivery

- Session management and analytics

This modular architecture made it easier to optimize latency-sensitive paths and scale services independently.
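
As an illustration of how these service boundaries might be expressed in TypeScript, the sketch below models each stage as a typed async function and composes the stages into one pipeline. Because `pipe` links the stages by type, a mismatched contract between two services becomes a compile error. The stage names, message types, and stub implementations are all hypothetical. In a real deployment each stage would sit behind its own service endpoint rather than run in-process.

```typescript
// Hypothetical contracts for each stage; each would map to one backend service.
interface AudioChunk { sessionId: string; seq: number; samples: Float32Array }
interface Transcript { sessionId: string; seq: number; text: string; lang: string }
interface Translation extends Transcript { targetLang: string; translated: string }
interface SpeechOut { sessionId: string; seq: number; audio: Uint8Array }

type Stage<I, O> = (input: I) => Promise<O>;

// Compose two stages; the output type of `first` must match the input of `second`.
function pipe<A, B, C>(first: Stage<A, B>, second: Stage<B, C>): Stage<A, C> {
  return async (input: A) => second(await first(input));
}

// Stub stages standing in for the recognition, translation, and synthesis services.
const recognize: Stage<AudioChunk, Transcript> = async (chunk) => ({
  sessionId: chunk.sessionId, seq: chunk.seq, text: "hello", lang: "en",
});

const translateStage: Stage<Transcript, Translation> = async (t) => ({
  ...t, targetLang: "es", translated: t.text === "hello" ? "hola" : t.text,
});

const synthesize: Stage<Translation, SpeechOut> = async (t) => ({
  sessionId: t.sessionId,
  seq: t.seq,
  // Placeholder bytes standing in for synthesized audio.
  audio: Uint8Array.from(t.translated, (ch) => ch.charCodeAt(0)),
});

// Session and sequence IDs flow through every stage, which keeps outputs attributable.
const speakEasyPipeline = pipe(pipe(recognize, translateStage), synthesize);
```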

Real-Time Streaming Layer

To support live interactions, SpeakEasy uses:

- WebRTC for real-time audio transport

- WebSocket channels for low-latency event and transcript updates

This combination enables interactive conversations with synchronized text and voice output.
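
One way to keep text and voice synchronized over the WebSocket channel is to tag every event with a per-utterance ID. The client can then match caption updates with the audio segments that arrive separately over WebRTC. The event names and fields below are a hypothetical schema, not SpeakEasy's documented wire format. The decoder validates each field, because anything arriving over a socket should be treated as untrusted.

```typescript
// Hypothetical event schema for the transcript/event WebSocket channel.
type ServerEvent =
  | { type: "partial_transcript"; utteranceId: number; text: string }
  | { type: "final_transcript"; utteranceId: number; text: string; lang: string }
  | { type: "translation"; utteranceId: number; text: string; targetLang: string }
  | { type: "audio_ready"; utteranceId: number; durationMs: number };

// Serialize an event for a text WebSocket frame.
function encodeEvent(event: ServerEvent): string {
  return JSON.stringify(event);
}

// Parse and validate an incoming message; returns null for anything malformed.
function decodeEvent(raw: string): ServerEvent | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not JSON at all
  }
  if (typeof data !== "object" || data === null) return null;
  const { type, utteranceId, text, lang, targetLang, durationMs } = data as Record<string, unknown>;
  if (typeof utteranceId !== "number") return null;
  if (type === "audio_ready") {
    return typeof durationMs === "number" ? { type: "audio_ready", utteranceId, durationMs } : null;
  }
  if (typeof text !== "string") return null;
  if (type === "partial_transcript") return { type: "partial_transcript", utteranceId, text };
  if (type === "final_transcript" && typeof lang === "string") {
    return { type: "final_transcript", utteranceId, text, lang };
  }
  if (type === "translation" && typeof targetLang === "string") {
    return { type: "translation", utteranceId, text, targetLang };
  }
  return null; // unknown type or missing fields
}
```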

Frontend Experience

The UI was built in React.js with real-time caption rendering and responsive session controls, allowing users to follow translated conversations with minimal cognitive overhead.
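
Recognizers emit a stream of partial results that are later finalized. Caption state therefore has to replace interim text in place rather than append duplicates. The stack lists Redux but does not describe its actions, so the action names, the `Caption` shape, and the history cap below are assumptions. The sketch shows a minimal Redux-style reducer for this behavior.

```typescript
interface Caption { utteranceId: number; text: string; final: boolean }
interface CaptionState { captions: Caption[] }

// Hypothetical action types for caption updates.
type CaptionAction =
  | { type: "captions/partial"; utteranceId: number; text: string }
  | { type: "captions/final"; utteranceId: number; text: string };

const initialState: CaptionState = { captions: [] };
const MAX_CAPTIONS = 50; // hypothetical cap so long sessions don't grow unbounded

function captionsReducer(state: CaptionState = initialState, action: CaptionAction): CaptionState {
  const final = action.type === "captions/final";
  const index = state.captions.findIndex((c) => c.utteranceId === action.utteranceId);
  const existing = index >= 0 ? state.captions[index] : undefined;
  // A late partial result must not overwrite text that was already finalized.
  if (existing && existing.final && !final) return state;
  const updated: Caption = { utteranceId: action.utteranceId, text: action.text, final };
  const captions =
    index >= 0
      ? state.captions.map((c, i) => (i === index ? updated : c)) // update in place
      : [...state.captions, updated].slice(-MAX_CAPTIONS);        // append, keep recent history
  return { captions };
}
```

Returning new arrays rather than mutating keeps the reducer compatible with Redux's change detection, so only captions that actually changed re-render.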

Key Technical Features

1. Speech Processing Pipeline

The speech layer combines Google Speech-to-Text and Whisper AI to improve recognition accuracy across accents and language conditions.

Implementation highlights:

- Custom FFmpeg preprocessing for cleaner audio input

- Adaptive normalization for varied microphone quality

- Sub-second recognition under normal network conditions
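
FFmpeg preprocessing typically converts whatever the client sends into the format recognition engines expect. Whisper, for example, operates on 16 kHz mono audio. The helper below only builds an argument list; it does not run FFmpeg. The function name, the band-pass filter chain, and the cutoff values are illustrative assumptions rather than SpeakEasy's tuned settings. The flags themselves (`-i`, `-af`, `-ac`, `-ar`, `-f s16le`, and the `highpass`/`lowpass` filters) are standard FFmpeg options.

```typescript
// Options for the hypothetical preprocessing helper.
interface PreprocessOptions {
  sampleRate?: number; // target rate for the recognizer (16 kHz is common for ASR)
  highpassHz?: number; // removes low-frequency rumble (illustrative default)
  lowpassHz?: number;  // trims content above the speech band (illustrative default)
}

// Builds FFmpeg arguments that read audio from stdin and write raw
// 16-bit little-endian mono PCM to stdout.
function buildFfmpegArgs(opts: PreprocessOptions = {}): string[] {
  const { sampleRate = 16000, highpassHz = 80, lowpassHz = 7600 } = opts;
  if (lowpassHz >= sampleRate / 2) {
    throw new Error("lowpass cutoff must be below the Nyquist frequency of the output");
  }
  return [
    "-hide_banner",
    "-loglevel", "error",
    "-i", "pipe:0",                                          // input from stdin
    "-af", `highpass=f=${highpassHz},lowpass=f=${lowpassHz}`, // simple speech band-pass
    "-ac", "1",                                              // downmix to mono
    "-ar", String(sampleRate),                               // resample
    "-f", "s16le",                                           // raw PCM output
    "pipe:1",                                                // output to stdout
  ];
}
```

In Node.js this could be wired up with `spawn("ffmpeg", buildFfmpegArgs())` from `node:child_process`. Incoming audio would be written to the process's stdin, and PCM frames would be read from its stdout.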

2. Translation Engine

The translation layer uses multiple providers and models with failover support:

- DeepL and Google Translate for broad language coverage

- OpenAI and Gemini for context-aware refinement

- Routing logic for specialized language-pair handling

This hybrid approach improved reliability and quality compared to single-provider designs.
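
Routing with failover can be sketched as an ordered provider list per language pair, tried in turn with a per-attempt timeout. The provider keys mirror the services named above, but several details are hypothetical: the `TranslateFn` interface, the routing table, and the 400 ms timeout. Real calls would go through each vendor's own SDK or REST API, and the context-aware refinement pass is not shown.

```typescript
type TranslateFn = (text: string, source: string, target: string) => Promise<string>;

// Hypothetical routing table: preferred provider order per "source-target" pair.
const ROUTES: Record<string, string[]> = {
  "en-de": ["deepl", "google"],
  default: ["google", "deepl"],
};

// Rejects if the wrapped promise does not settle within `ms` milliseconds.
function withTimeout<T>(promise: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`timed out after ${ms} ms`)), ms);
    promise.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (err) => { clearTimeout(timer); reject(err); },
    );
  });
}

// Tries each configured provider for the pair in order; throws only if all fail.
async function translateWithFailover(
  providers: Record<string, TranslateFn>,
  text: string,
  source: string,
  target: string,
  timeoutMs = 400,
): Promise<{ provider: string; text: string }> {
  const order = ROUTES[`${source}-${target}`] ?? ROUTES.default;
  const errors: string[] = [];
  for (const providerName of order) {
    const fn = providers[providerName];
    if (!fn) continue; // provider not configured in this deployment
    try {
      return { provider: providerName, text: await withTimeout(fn(text, source, target), timeoutMs) };
    } catch (err) {
      errors.push(`${providerName}: ${String(err)}`);
    }
  }
  throw new Error(`all providers failed (${errors.join("; ")})`);
}
```

A timed-out request is abandoned here but not cancelled. Passing an `AbortSignal` through to providers that support one would free resources sooner.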

3. Voice Synthesis

For translated speech output, SpeakEasy integrates:

- Amazon Polly

- OpenAI text-to-speech

Users can configure voice characteristics such as gender and accent, while buffering optimizations keep playback smooth during live sessions.
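
Smooth playback during live sessions usually depends on a small jitter buffer. Synthesized audio segments are queued in sequence order, and playback starts or resumes only once enough audio is buffered to absorb network jitter. The class below is a hypothetical sketch of that idea, and the 250 ms start threshold is an assumed value, not one taken from SpeakEasy.

```typescript
interface AudioSegment { seq: number; durationMs: number; data: Uint8Array }

class PlaybackBuffer {
  private queue: AudioSegment[] = [];
  private bufferedMs = 0;  // total queued audio
  private playing = false;
  private nextSeq = 0;     // lowest sequence number still eligible for playback
  private readonly startThresholdMs: number;

  constructor(startThresholdMs = 250) {
    this.startThresholdMs = startThresholdMs;
  }

  // Insert a segment in sequence order; duplicates and already-played segments are dropped.
  push(segment: AudioSegment): void {
    if (segment.seq < this.nextSeq || this.queue.some((s) => s.seq === segment.seq)) return;
    this.queue.push(segment);
    this.queue.sort((a, b) => a.seq - b.seq);
    this.bufferedMs += segment.durationMs;
    if (!this.playing && this.bufferedMs >= this.startThresholdMs) this.playing = true;
  }

  // Called by the audio output when it needs data; returns null while (re)buffering.
  pull(): AudioSegment | null {
    if (!this.playing) return null;
    const next = this.queue.shift();
    if (!next) {
      this.playing = false; // underrun: wait for the buffer to refill before resuming
      return null;
    }
    this.bufferedMs -= next.durationMs;
    this.nextSeq = next.seq + 1;
    return next;
  }
}
```

The threshold trades latency for smoothness. A larger buffer rides out more jitter but delays the translated voice by the same amount.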

4. Offline Translation Support

SpeakEasy also includes offline capabilities for constrained environments:

- Lightweight translation models for offline processing

- Efficient local caching strategies

- Seamless online/offline switching logic
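
The local cache and the online/offline switch can be combined in one small wrapper. The wrapper checks a bounded cache first, uses the cloud path when the network is available, and otherwise falls back to a local model. `onlineTranslate`, `offlineTranslate`, and `isOnline` are hypothetical hooks. The LRU cache relies on a `Map` iterating its keys in insertion order. In this sketch only cloud results are cached, so lower-quality offline output is not reused once connectivity returns.

```typescript
type Translator = (text: string, target: string) => Promise<string>;

// Small LRU cache built on Map, which iterates keys in insertion order.
class LruCache<V> {
  private map = new Map<string, V>();
  private readonly capacity: number;

  constructor(capacity: number) {
    this.capacity = capacity;
  }

  get(key: string): V | undefined {
    const value = this.map.get(key);
    if (value !== undefined) {
      this.map.delete(key); // re-insert to mark as most recently used
      this.map.set(key, value);
    }
    return value;
  }

  set(key: string, value: V): void {
    this.map.delete(key);
    this.map.set(key, value);
    if (this.map.size > this.capacity) {
      const oldest = this.map.keys().next().value; // least recently used key
      if (oldest !== undefined) this.map.delete(oldest);
    }
  }
}

function createHybridTranslator(
  onlineTranslate: Translator,
  offlineTranslate: Translator,
  isOnline: () => boolean,
  cache = new LruCache<string>(500),
): Translator {
  return async (text, target) => {
    const key = `${target}:${text}`;
    const hit = cache.get(key);
    if (hit !== undefined) return hit;
    if (isOnline()) {
      try {
        const result = await onlineTranslate(text, target);
        cache.set(key, result); // cache only cloud-quality results
        return result;
      } catch {
        // Network dropped mid-request: fall through to the local model.
      }
    }
    return offlineTranslate(text, target);
  };
}
```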

Technical Challenges and Solutions

Challenge 1: Latency Optimization

Real-time translation requires strict latency management. I reduced round-trip latency to under 500 ms by:

- Running recognition and translation in parallel where possible

- Optimizing stream packet handling

- Minimizing serialization and network overhead
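
On the serialization point, a common approach on any hop where audio travels over a socket or between services is to use compact binary frames. This avoids embedding base64 text inside JSON. The sketch below uses `DataView` to pack a 12-byte header (sequence number, capture timestamp, sample count) in front of 16-bit PCM samples. The frame layout is a hypothetical illustration, not SpeakEasy's documented format.

```typescript
const HEADER_BYTES = 12;

interface AudioFrame { seq: number; timestampMs: number; samples: Int16Array }

// Layout (little-endian): [u32 seq][u32 timestampMs since session start][u32 sampleCount][i16 samples...]
function encodeFrame(frame: AudioFrame): ArrayBuffer {
  const buffer = new ArrayBuffer(HEADER_BYTES + frame.samples.length * 2);
  const view = new DataView(buffer);
  view.setUint32(0, frame.seq, true);
  view.setUint32(4, frame.timestampMs, true);
  view.setUint32(8, frame.samples.length, true);
  for (let i = 0; i < frame.samples.length; i++) {
    view.setInt16(HEADER_BYTES + i * 2, frame.samples[i], true);
  }
  return buffer;
}

function decodeFrame(buffer: ArrayBuffer): AudioFrame {
  if (buffer.byteLength < HEADER_BYTES) throw new Error("frame shorter than header");
  const view = new DataView(buffer);
  const count = view.getUint32(8, true);
  if (buffer.byteLength !== HEADER_BYTES + count * 2) throw new Error("truncated or corrupt frame");
  const samples = new Int16Array(count);
  for (let i = 0; i < count; i++) samples[i] = view.getInt16(HEADER_BYTES + i * 2, true);
  return { seq: view.getUint32(0, true), timestampMs: view.getUint32(4, true), samples };
}
```

Take a 20 ms chunk of 16 kHz mono audio (320 samples). In this layout it is 652 bytes. Base64-encoding the same 640 bytes of PCM alone would already take 856 characters, before any JSON overhead.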

Challenge 2: Scalability

To support growth and usage spikes, I implemented:

- Horizontally scalable services

- Load-balanced routing paths

- Query and cache optimizations for persistent state
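
For live sessions, load-balanced routing usually needs session affinity, so that all audio for one conversation reaches the worker holding its stream state. Consistent hashing is one standard way to provide that affinity while limiting how many sessions move when instances are added or removed. The ring below is a generic sketch using 32-bit FNV-1a hashing and virtual nodes. The instance names and the replica count of 100 are assumptions, and the source does not say which balancing scheme SpeakEasy uses.

```typescript
// 32-bit FNV-1a over UTF-16 code units (fine for routing keys, not for security).
function fnv1a(key: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash >>> 0;
}

class HashRing {
  private ring: { point: number; node: string }[] = [];
  private readonly replicas: number;

  constructor(nodes: string[], replicas = 100) {
    this.replicas = replicas;
    nodes.forEach((n) => this.add(n));
  }

  // Each node gets several virtual points so load spreads evenly around the ring.
  add(node: string): void {
    for (let r = 0; r < this.replicas; r++) {
      this.ring.push({ point: fnv1a(`${node}#${r}`), node });
    }
    this.ring.sort((a, b) => a.point - b.point);
  }

  remove(node: string): void {
    this.ring = this.ring.filter((entry) => entry.node !== node);
  }

  // Route a key to the first virtual point at or after its hash (wrapping around).
  lookup(key: string): string {
    if (this.ring.length === 0) throw new Error("no nodes available");
    const h = fnv1a(key);
    let lo = 0;
    let hi = this.ring.length;
    while (lo < hi) {
      const mid = (lo + hi) >>> 1;
      if (this.ring[mid].point < h) lo = mid + 1;
      else hi = mid;
    }
    return this.ring[lo % this.ring.length].node;
  }
}
```

When an instance is removed, only the sessions it owned are remapped. Every other session keeps its worker and in-memory stream state.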

Challenge 3: Audio Quality Under Real Conditions

User devices and environments vary significantly, so audio resilience was critical:

- Adaptive noise handling

- Automatic gain control

- Custom preprocessing tuned for live conversational speech
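
Automatic gain control and basic noise handling can both be expressed as per-frame processing on decoded samples. The function below is a simplified sketch, not SpeakEasy's tuned preprocessing. It measures each frame's RMS level and gates frames below an assumed noise floor. It then moves the gain only part of the way toward its target on each frame, so speech is not pumped up and down abruptly. All option names and threshold values are hypothetical.

```typescript
interface AgcState { gain: number }

interface AgcOptions {
  targetRms: number;  // desired level for speech frames (linear, 0..1)
  noiseFloor: number; // frames quieter than this are treated as background noise
  maxGain: number;    // cap so near-silent input is not amplified into hiss
  smoothing: number;  // 0..1, fraction of the way gain moves toward its target per frame
}

const DEFAULT_AGC: AgcOptions = { targetRms: 0.1, noiseFloor: 0.005, maxGain: 10, smoothing: 0.2 };

function rms(frame: Float32Array): number {
  let sum = 0;
  for (let i = 0; i < frame.length; i++) sum += frame[i] * frame[i];
  return frame.length > 0 ? Math.sqrt(sum / frame.length) : 0;
}

// Processes one frame of float samples in [-1, 1]; returns a new frame and updates state.gain.
function processFrame(frame: Float32Array, state: AgcState, opts: AgcOptions = DEFAULT_AGC): Float32Array {
  const level = rms(frame);
  const out = new Float32Array(frame.length); // zero-filled by default
  if (level < opts.noiseFloor) return out;    // noise gate: emit silence, keep gain unchanged
  const desired = Math.min(opts.targetRms / level, opts.maxGain);
  state.gain += (desired - state.gain) * opts.smoothing;
  for (let i = 0; i < frame.length; i++) {
    // Apply gain and hard-limit to the valid sample range.
    out[i] = Math.max(-1, Math.min(1, frame[i] * state.gain));
  }
  return out;
}
```

A production version would typically fade the gate in and out rather than cutting to zero. It would also use a spectral denoiser rather than a level threshold alone.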

Impact and Results

SpeakEasy delivered measurable performance and adoption outcomes:

| Metric | Result |
|---|---|
| Global Adoption | 10,000+ users across 50+ countries |
| Translation Accuracy | 95% across 100+ language pairs |
| Meeting Efficiency | 60% faster communication in multilingual sessions |
| Reliability | 99.9% uptime |
| Usage Volume | 1M+ minutes of translated audio processed |

These outcomes show strong practical value for real-world multilingual collaboration.

Technical Stack

Frontend

- React.js with TypeScript

- WebRTC for live media transport

- WebSocket for real-time state and transcript updates

- Material UI for responsive interface components

- Redux for predictable state management

Backend

- Node.js and Express.js microservices

- WebSocket servers for live signaling and events

- FFmpeg for audio preprocessing

- MongoDB for persistence and session data

AI and ML

- Whisper AI and Google Speech APIs for transcription

- TensorFlow for custom model workflows

- OpenAI and Gemini integration for context handling

- NLP pipelines with transfer learning strategies

Cloud and DevOps

- AWS Lambda, Firebase, and GCP deployment architecture

- Docker containerization

- Kubernetes orchestration

- GitHub Actions CI/CD pipelines

Future Development

Active roadmap priorities include:

- Improved context awareness with advanced NLP

- Emotion detection and tone matching

- Real-time accent adaptation

- Expanded offline language and model support

Professional Skills Demonstrated

This project required end-to-end execution across:

- Full-stack product engineering

- Real-time system architecture

- AI/ML integration and optimization

- Cloud infrastructure and distributed deployment

- High-reliability API and service design

Conclusion

SpeakEasy demonstrates how real-time AI translation can move from concept to production impact when performance, architecture, and user experience are engineered together.

By combining low-latency streaming, multi-model translation intelligence, and scalable cloud infrastructure, the platform makes multilingual communication faster, more inclusive, and more effective in everyday global collaboration.

Related Projects

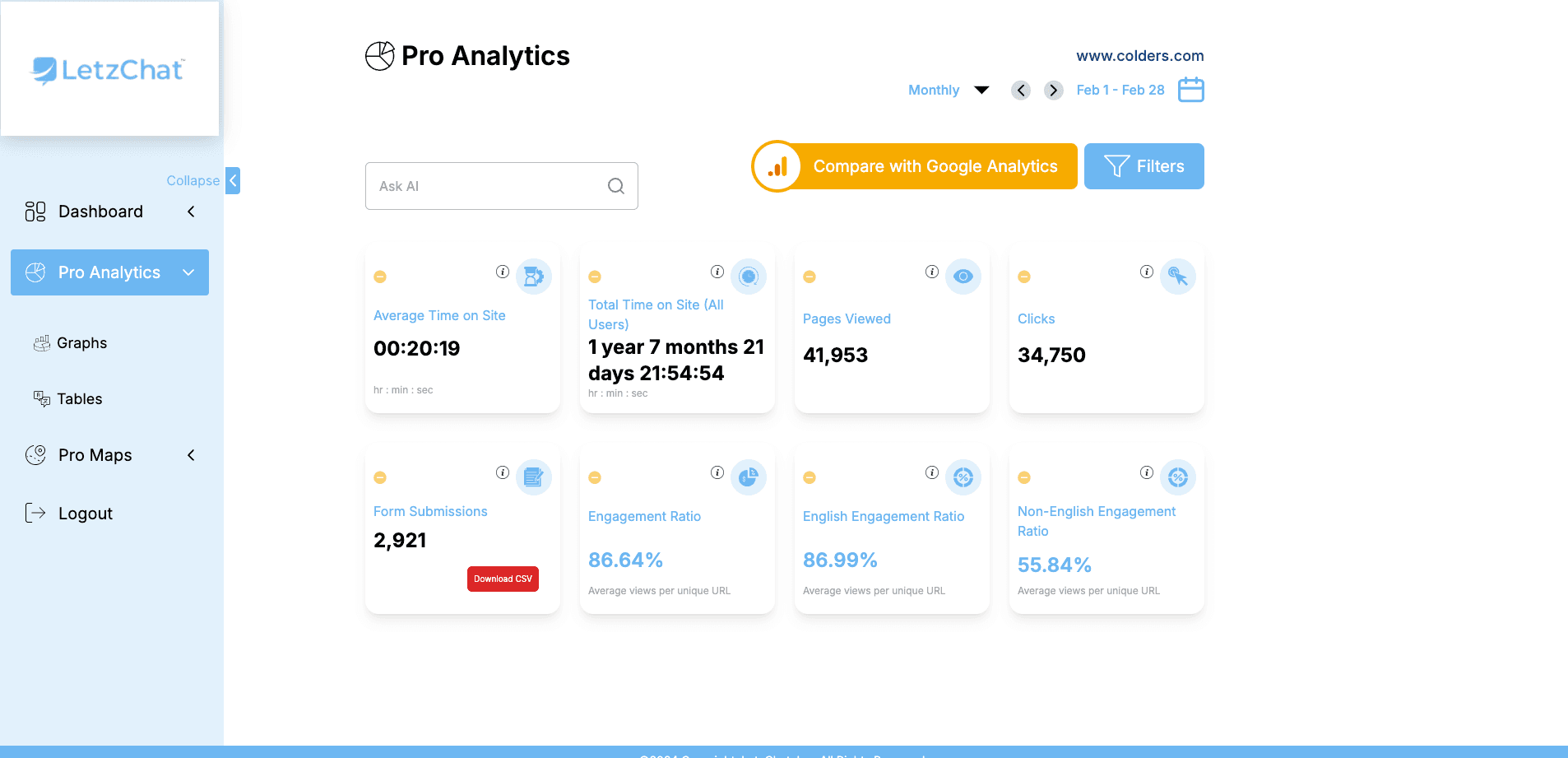

LetzChat – Enterprise Multilingual Translation & Communication Platform

Complete enterprise translation ecosystem — featuring real-time analytics (300M+ events/month), AI-powered chat, voice/video dubbing, live call translation, podcast/Zoom integration, glossary management, subtitle generation, and comprehensive analytics — breaking language barriers across all communication channels.

VoiceDubbing.ai – AI-Powered Voice Dubbing for Seamless Multilingual Audio

AI-powered dubbing SaaS platform that delivers multilingual, emotionally expressive voiceovers with automation, lip-sync precision, and cloud-scale processing.

AI Calling Agent with Admin Dashboard for Doctors

AI-powered healthcare communication platform combining an intelligent voice bot with an admin dashboard for appointment workflows, campaign control, and real-time call analytics.

Related Articles

CASA App: A Revolution in Multilingual Social Networking

A case study on CASA App, a real-time multilingual social platform built with Node.js, Socket.io, React, and AWS to enable seamless cross-language communication.

Unite: Breaking Language Barriers Through Real-Time Translation

A technical case study on Unite, a real-time multilingual communication platform that enables live presentations and audience participation across different languages.

Breaking Language Barriers: Revolutionizing Global Communication in Virtual Meetings

How the Zoom Meeting Live Translation Captions System uses Whisper AI, AWS, and real-time translation pipelines to enable multilingual participation in virtual meetings.