Video Dubbing and Voice Cloning System: AI-Powered Content Localization

Introduction

Video is one of the most effective formats for global communication, but language barriers still limit how far content can reach. Traditional dubbing workflows are expensive, slow, and hard to scale across multiple languages.

To solve this, I led the development of an AI-powered Video Dubbing and Voice Cloning System that translates videos while preserving the original speaker's voice characteristics and emotional tone.

The goal was to help creators and businesses localize content faster without losing authenticity.

Project Overview

This platform was built as an end-to-end localization pipeline for multilingual video distribution.

It handles:

- Speech recognition and speaker analysis

- Translation into target languages

- Voice cloning with emotion-aware synthesis

- Lip-sync and timeline alignment

- Batch processing and quality review workflows

The system enables global content distribution with roughly 70% shorter dubbing time and 60% lower production cost than the traditional workflow it replaced.

Core Technical Challenges and Solutions

1. Voice Recognition and Analysis

Live and recorded media vary widely in audio quality and speaking style, and many videos contain more than one speaker. I implemented a recognition layer that:

- Detects speaker traits and speech patterns

- Extracts tone and emotion markers

- Handles multiple speakers within the same video

- Maintains timing references for subtitle and dub alignment
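As a rough illustration of what the recognition layer hands to later stages, the sketch below models a speaker-attributed segment with timing references. The `SpeechSegment` class, its field names, and the emotion labels are hypothetical and chosen for this example; the production schema is not described here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SpeechSegment:
    """One transcribed utterance with speaker and timing metadata (hypothetical schema)."""
    speaker_id: str           # label from speaker identification, e.g. "spk_0"
    start_s: float            # segment start on the source timeline, in seconds
    end_s: float              # segment end on the source timeline, in seconds
    text: str                 # transcribed source-language text
    emotion: str = "neutral"  # coarse emotion marker; the label set is an assumption

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


def group_by_speaker(segments):
    """Return {speaker_id: [segments in start-time order]}."""
    grouped = defaultdict(list)
    for seg in sorted(segments, key=lambda s: s.start_s):
        grouped[seg.speaker_id].append(seg)
    return dict(grouped)


segments = [
    SpeechSegment("spk_1", 4.0, 6.5, "Thanks for having me.", "warm"),
    SpeechSegment("spk_0", 0.0, 3.5, "Welcome to the show."),
    SpeechSegment("spk_0", 7.0, 9.0, "Let's get started.", "excited"),
]
by_speaker = group_by_speaker(segments)
```

Keeping start and end times on every segment is what lets the same record drive both subtitle timing and dub alignment later on.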

2. Voice Cloning and Emotion Preservation

The dubbed output needed to sound natural in the target language while keeping the speaker's identity intact. The voice synthesis pipeline was designed to:

- Generate natural-sounding cloned voices

- Preserve emotional cues and delivery style

- Adapt outputs for language and cultural context

- Support downstream lip-sync compatibility

3. Lip-Sync and Frame Alignment

Dubbing quality depends on synchronization. I built a timing adjustment workflow that aligns translated speech with source pacing and video frames to reduce perceptual mismatch.
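One simple way to think about timing adjustment is as a tempo fit: if the translated line runs longer than the original, speed it up slightly so it lands in the same slot. The sketch below is a minimal illustration under that assumption, not the platform's actual alignment logic. The function names and the 0.8 to 1.25 clamp range are hypothetical; `atempo` is a standard FFmpeg audio filter.

```python
def tempo_factor(dub_s: float, slot_s: float,
                 min_factor: float = 0.8, max_factor: float = 1.25) -> float:
    """Speed multiplier that makes dubbed audio fit its source slot.

    Values above 1.0 speed the dub up; values below 1.0 slow it down.
    The clamp range is an assumed comfort limit, not a measured one.
    """
    if dub_s <= 0 or slot_s <= 0:
        raise ValueError("durations must be positive")
    return max(min_factor, min(max_factor, dub_s / slot_s))


def overflow_after_fit(dub_s: float, slot_s: float, factor: float) -> float:
    """Seconds by which the dub still overruns the slot after applying `factor`."""
    return max(0.0, dub_s / factor - slot_s)


def atempo_filter(factor: float) -> str:
    """Build an FFmpeg audio filter string for the chosen tempo."""
    return f"atempo={factor:.3f}"


# A 6.0 s translated line that must fit a 5.0 s source slot.
factor = tempo_factor(6.0, 5.0)
filter_arg = atempo_filter(factor)
```

The resulting string can be passed to FFmpeg as `-filter:a "atempo=1.200"`. When the clamp is hit, `overflow_after_fit` reports the remaining overrun, which could be a cue to shorten the translation instead of stretching the audio further.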

System Architecture

Cloud Infrastructure

The solution runs on scalable cloud infrastructure:

- AWS Lambda for serverless orchestration and event-driven processing

- Amazon EC2 for compute-intensive voice and media tasks

- DynamoDB for metadata, job state, and processing history

- Custom Node.js APIs for workflow control and platform integration
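To make the "metadata, job state, and processing history" role concrete, here is a small sketch of what a job record and its status transitions might look like. It uses plain dictionaries so it runs without AWS access; the attribute names, status values, and the `s3://example-bucket` URI are all hypothetical, and a real DynamoDB table would also need a key schema and conditional writes.

```python
from datetime import datetime, timezone

# Allowed status transitions (hypothetical state names).
TRANSITIONS = {
    "QUEUED": {"PROCESSING"},
    "PROCESSING": {"REVIEW", "FAILED"},
    "REVIEW": {"PUBLISHED", "PROCESSING"},
    "FAILED": {"QUEUED"},
    "PUBLISHED": set(),
}


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


def new_job(job_id, source_uri, target_langs):
    """Create a job item holding metadata, current state, and history."""
    return {
        "job_id": job_id,
        "source_uri": source_uri,
        "target_langs": list(target_langs),
        "status": "QUEUED",
        "history": [{"status": "QUEUED", "at": _now()}],
    }


def advance(job, new_status):
    """Return a copy of the job in `new_status`, rejecting invalid transitions."""
    if new_status not in TRANSITIONS[job["status"]]:
        raise ValueError(f"cannot go from {job['status']} to {new_status}")
    entry = {"status": new_status, "at": _now()}
    return {**job, "status": new_status, "history": job["history"] + [entry]}


job = new_job("job-001", "s3://example-bucket/talk.mp4", ["es", "de"])
processing = advance(job, "PROCESSING")
```

Appending to `history` on every transition keeps an audit trail alongside the current status, so a reviewer can see how a job reached its present state.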

Processing Pipeline

The dubbing engine follows a staged architecture:

- Ingest source media and extract audio tracks

- Identify speakers and transcribe speech segments

- Translate text with context-aware language handling

- Synthesize target-language cloned voice output

- Align dubbed audio with timeline and lip movement constraints

- Run quality checks and publish localized assets

This design keeps workflows reliable and scalable for high-volume production use.
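The staged flow above can be sketched as an ordered list of functions, each taking the job context and returning an updated copy. Every stage body here is a placeholder that only tags the data; the stage names and context keys are hypothetical and stand in for the real recognition, translation, synthesis, and alignment components.

```python
def extract_audio(ctx):
    return {**ctx, "audio": f"{ctx['source']}.wav"}


def transcribe(ctx):
    # Placeholder for speaker identification plus transcription.
    return {**ctx, "segments": [{"speaker": "spk_0", "text": "Hello everyone"}]}


def translate(ctx):
    # Placeholder: tags the text with the target language instead of translating.
    lang = ctx["target_lang"]
    segs = [{**s, "text": f"[{lang}] {s['text']}"} for s in ctx["segments"]]
    return {**ctx, "segments": segs}


def synthesize(ctx):
    return {**ctx, "dub_audio": f"{ctx['source']}.{ctx['target_lang']}.wav"}


def align(ctx):
    return {**ctx, "aligned": True}


def quality_check(ctx):
    if not ctx["segments"] or not ctx["aligned"]:
        raise RuntimeError("quality check failed")
    return {**ctx, "approved": True}


STAGES = [
    ("ingest", extract_audio),
    ("transcribe", transcribe),
    ("translate", translate),
    ("synthesize", synthesize),
    ("align", align),
    ("quality_check", quality_check),
]


def run_pipeline(ctx, stages=STAGES):
    """Run stages in order and record which ones completed."""
    completed = []
    for name, stage in stages:
        ctx = stage(ctx)
        completed.append(name)
    return {**ctx, "completed": completed}


result = run_pipeline({"source": "talk.mp4", "target_lang": "es"})
```

Because each stage only reads and returns the context, a stage can be swapped or retried without touching the others.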

Technology Stack

Frontend

- React

- HTML5

- JavaScript

Backend

- Node.js

- Python

AI and ML

- ChatGPT

- Whisper (OpenAI speech recognition)

- Custom voice cloning models

Cloud

- AWS Lambda

- Amazon EC2

- DynamoDB

Media Processing

- FFmpeg

- Custom dubbing pipeline components

Key Features

- Automated gender and speaker recognition

- Real-time-capable voice cloning pipeline

- Multi-language dubbing support

- Emotion-preserving speech synthesis

- Automated lip-sync adjustment

- Batch processing workflows

- Quality assurance and review controls

Results and Impact

The platform delivered strong measurable outcomes:

| Metric | Result |
|---|---|
| Dubbing Time | Reduced by 70% |
| Production Cost | Reduced by 60% |
| Voice Similarity Accuracy | 95% |
| Supported Target Languages | 10+ |
| Processed Content | 1000+ hours of video |

These results show that AI dubbing can be both cost-efficient and quality-preserving at scale.

Role and Responsibilities

As Team Lead and Developer, I was responsible for:

- Architecting the full system end to end

- Selecting and integrating core technologies

- Leading implementation with developers and AI specialists

- Collaborating with linguists for translation quality

- Optimizing performance, scalability, and reliability

- Designing quality control and validation workflows

Lessons Learned

This project reinforced several critical engineering principles:

- Real-time media systems require a strict latency budget and a deliberate buffering strategy

- AI quality depends on strong post-processing and review design

- Cross-cultural localization is both a technical and linguistic challenge

- Scalable architecture decisions must be made early in the project

Future Enhancements

Planned next steps include:

- Expanding language coverage

- Improving emotion detection precision

- Increasing real-time processing capabilities

- Enhancing accent preservation

- Extending support to mobile-first workflows

Conclusion

This Video Dubbing and Voice Cloning System demonstrates how AI can transform media localization from a manual bottleneck into a scalable production capability.

By combining voice intelligence, synchronization workflows, and cloud-native architecture, the platform enables creators to deliver multilingual video experiences faster, more affordably, and with higher consistency.

Related Projects

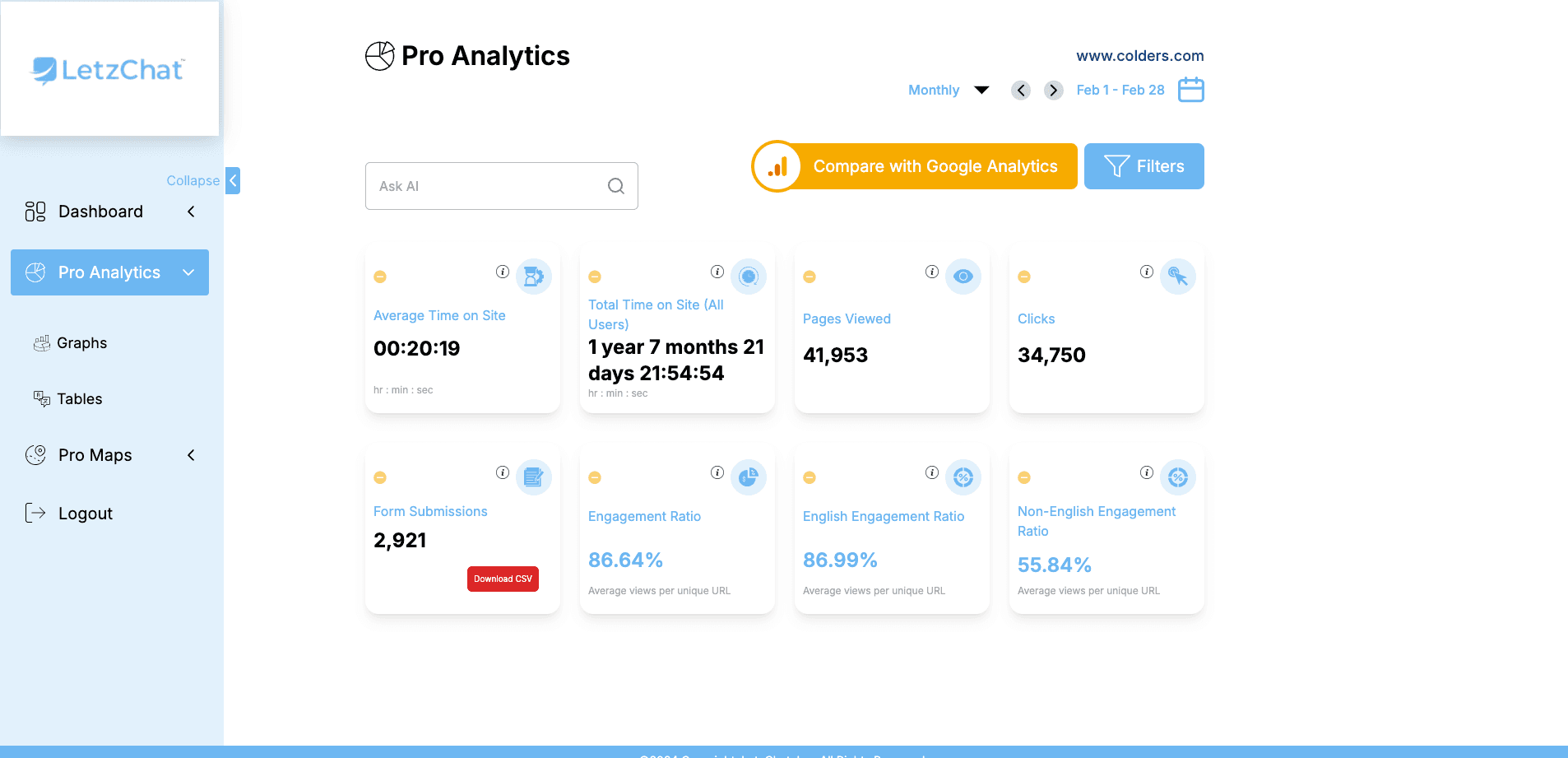

LetzChat – Enterprise Multilingual Translation & Communication Platform

Complete enterprise translation ecosystem — featuring real-time analytics (300M+ events/month), AI-powered chat, voice/video dubbing, live call translation, podcast/Zoom integration, glossary management, subtitle generation, and comprehensive analytics — breaking language barriers across all communication channels.

AI Calling Agent with Admin Dashboard for Doctors

AI-powered healthcare communication platform combining an intelligent voice bot with an admin dashboard for appointment workflows, campaign control, and real-time call analytics.

Levate.ai - AI-Driven Hotel Revenue Optimization Platform

Advanced AI-powered hospitality revenue platform built to maximize hotel profitability through dynamic pricing, smart upselling, and real-time market intelligence.

Related Articles

VoiceDubbing.ai: AI-Powered Voice Dubbing for Seamless Multilingual Audio

A complete case study on VoiceDubbing.ai, an AI dubbing platform built with FastAPI, Next.js, and neural voice models to deliver expressive, multilingual, lip-synced audio localization at scale.

Breaking Language Barriers: Revolutionizing Global Communication in Virtual Meetings

How the Zoom Meeting Live Translation Captions System uses Whisper AI, AWS, and real-time translation pipelines to enable multilingual participation in virtual meetings.

GenderRecognition.com: AI-Driven Gender Detection for Smarter Insights

Building a state-of-the-art AI platform that provides accurate, scalable, and privacy-compliant gender recognition solutions across multiple industries using deep learning, computer vision, and multi-modal AI.